StatsTest Blog

Experimental design, data analysis, and statistical tooling for modern teams. No hype, just the math.

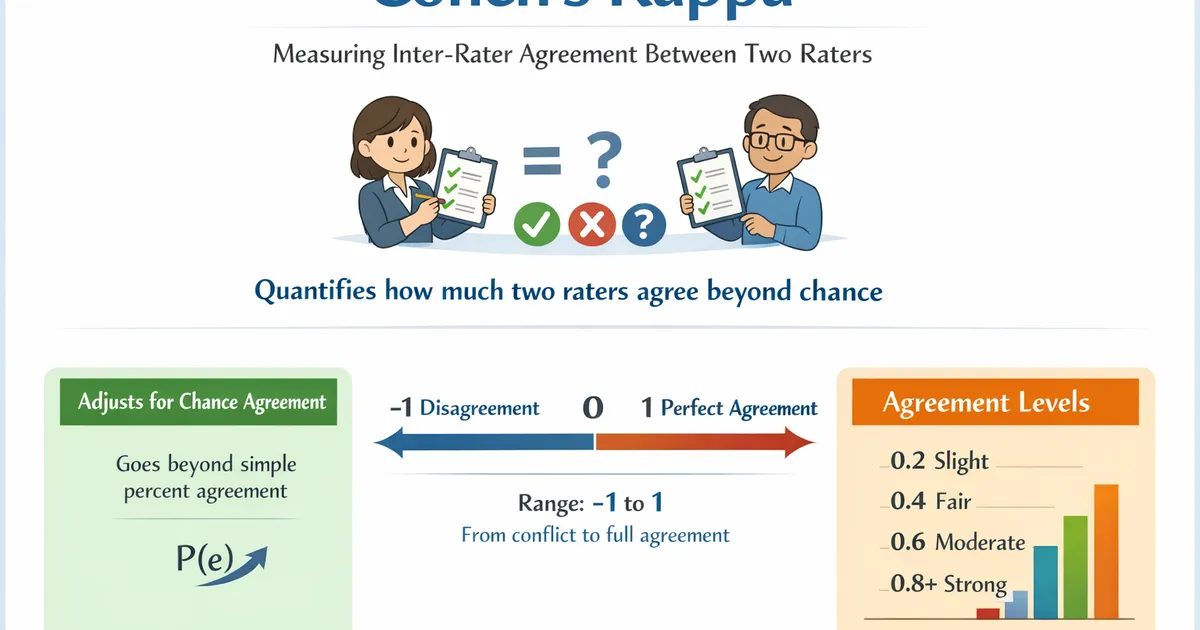

Cohen's Kappa

Cohen's Kappa measures inter-rater agreement between two raters classifying items into categories. Use it to quantify how much two raters agree beyond what would be expected by chance.
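As a rough illustration of "agreement beyond chance", the sketch below computes kappa as (p_o - p_e) / (1 - p_e), where p_o is the observed agreement and p_e is the agreement expected from each rater's category frequencies. The function name `cohen_kappa` and the sample labels are made up for this example.

```python
from collections import Counter


def cohen_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two equal-length lists of labels."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must rate the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items given the same label by both raters.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal proportions per category.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    p_e = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if p_e == 1.0:
        raise ValueError("kappa is undefined when both raters use one category only")
    return (p_o - p_e) / (1 - p_e)


# Hypothetical ratings: the raters agree on 3 of 4 items.
a = ["yes", "yes", "no", "no"]
b = ["yes", "no", "no", "no"]
print(cohen_kappa(a, b))
```

Here the raw agreement is 0.75, but half of that is expected by chance given the raters' label frequencies, so kappa comes out lower than the raw figure.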

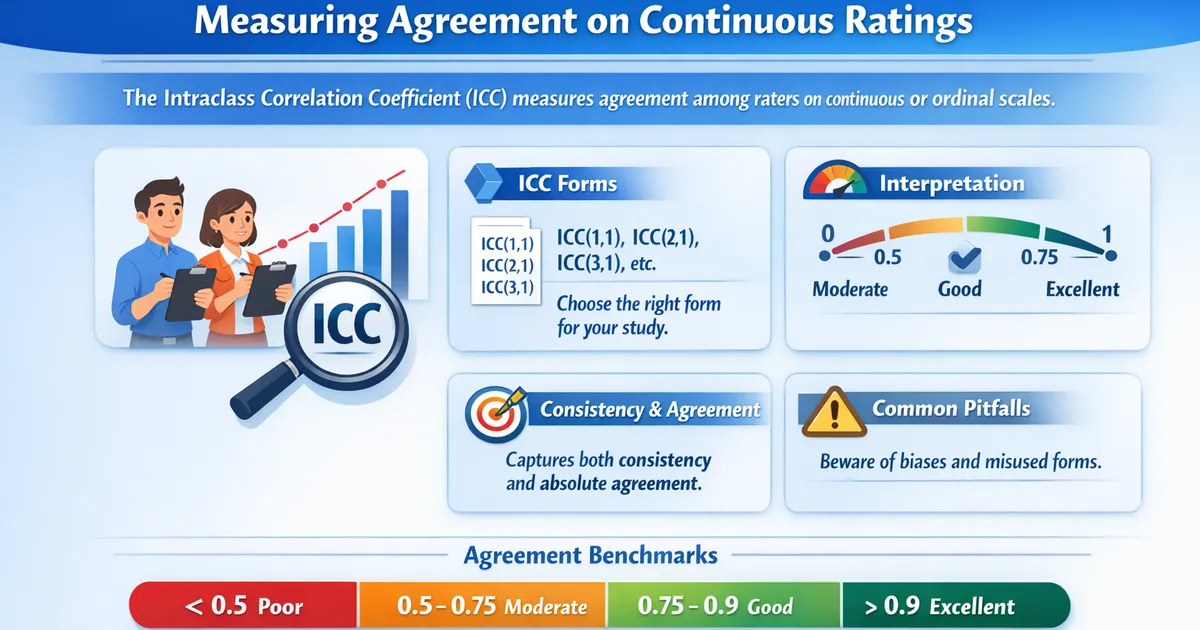

Intraclass Correlation: Measuring Agreement on Continuous Ratings

The Intraclass Correlation Coefficient (ICC) measures agreement among raters on continuous or ordinal scales. Learn which ICC form to use, how to interpret values, and which common pitfalls to avoid.

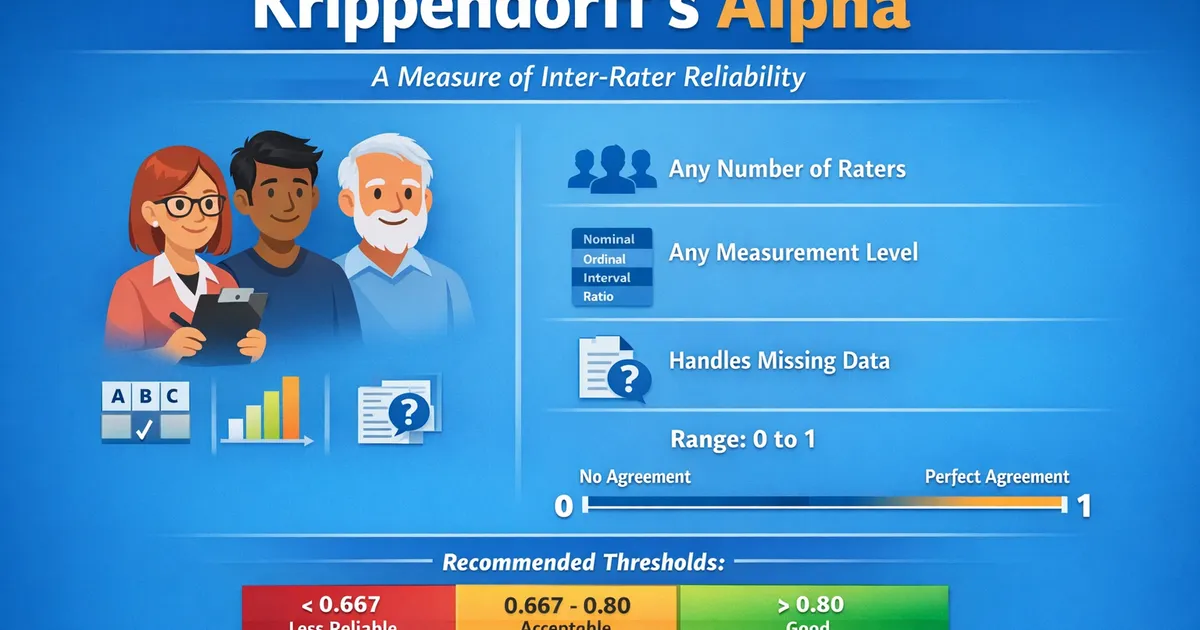

Krippendorff's Alpha

Krippendorff's Alpha measures inter-rater reliability for any number of raters, any number of categories, and any measurement level. Use it as a general-purpose agreement statistic.

Cramer's V

Cramer's V measures the strength of the relationship between two categorical variables, each with two or more unique values.
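A minimal sketch of the usual calculation, assuming the data is already summarized as a contingency table of counts: compute the chi-square statistic, then scale it by the sample size and the smaller table dimension. The function name `cramers_v` is hypothetical, and this version applies no bias correction.

```python
import math


def cramers_v(table):
    """Cramer's V from a contingency table given as a list of rows of counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    k = min(len(row_totals), len(col_totals))
    if k < 2 or 0 in row_totals or 0 in col_totals:
        raise ValueError("need at least a 2x2 table with no empty row or column")
    n = sum(row_totals)
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under independence of the two variables.
            expected = row_totals[i] * col_totals[j] / n
            chi2 += (observed - expected) ** 2 / expected
    return math.sqrt(chi2 / (n * (k - 1)))


print(cramers_v([[10, 0], [0, 10]]))  # perfectly associated table
print(cramers_v([[5, 5], [5, 5]]))    # no association
```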

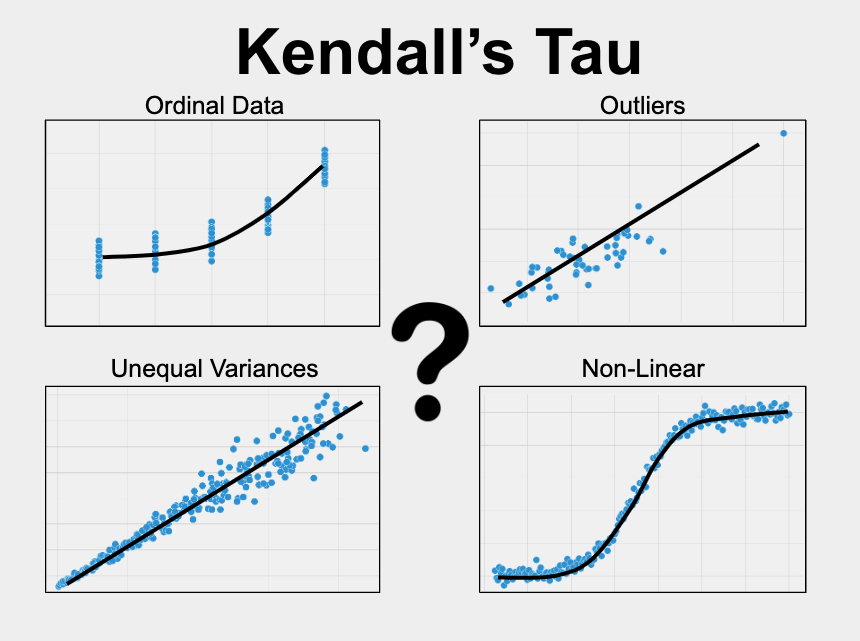

Kendall's Tau

Kendall's Tau measures the relationship between two variables when one or more of the variables is ordinal, skewed, or has outliers, or when the relationship between them is non-linear.
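Because the statistic depends only on the ordering of the values, it can be sketched by counting concordant and discordant pairs. The example below implements the simplest variant, tau-a, which makes no adjustment for ties; statistical packages often report tau-b instead. The name `kendall_tau_a` is made up for this illustration.

```python
from itertools import combinations


def kendall_tau_a(x, y):
    """Kendall's tau-a: (concordant - discordant) / total number of pairs."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length, at least 2")
    concordant = discordant = 0
    for (x1, y1), (x2, y2) in combinations(zip(x, y), 2):
        direction = (x1 - x2) * (y1 - y2)
        if direction > 0:
            concordant += 1   # both variables move in the same direction
        elif direction < 0:
            discordant += 1   # the variables move in opposite directions
        # Tied pairs (direction == 0) count toward neither total.
    n_pairs = len(x) * (len(x) - 1) / 2
    return (concordant - discordant) / n_pairs


print(kendall_tau_a([1, 2, 3], [1, 3, 2]))  # 2 concordant, 1 discordant pair
```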

Partial Correlation

Partial Correlation is used to examine the relationship between two continuous variables while accounting for the effects of one or more other variables.
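For the simplest case of a single control variable, the first-order partial correlation can be written in terms of the three pairwise correlations. The sketch below assumes those pairwise values are already known; the function name `partial_corr` and the sample numbers are hypothetical.

```python
import math


def partial_corr(r_xy, r_xz, r_yz):
    """Correlation of x and y after removing the linear effect of z."""
    denominator = math.sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))
    if denominator == 0:
        raise ValueError("undefined when x or y is perfectly correlated with z")
    return (r_xy - r_xz * r_yz) / denominator


# Hypothetical pairwise correlations: x and y both correlate 0.5 with z.
print(partial_corr(0.6, 0.5, 0.5))
```

With these numbers, part of the raw 0.6 correlation is attributed to the shared relationship with z, so the partial correlation is smaller than 0.6.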

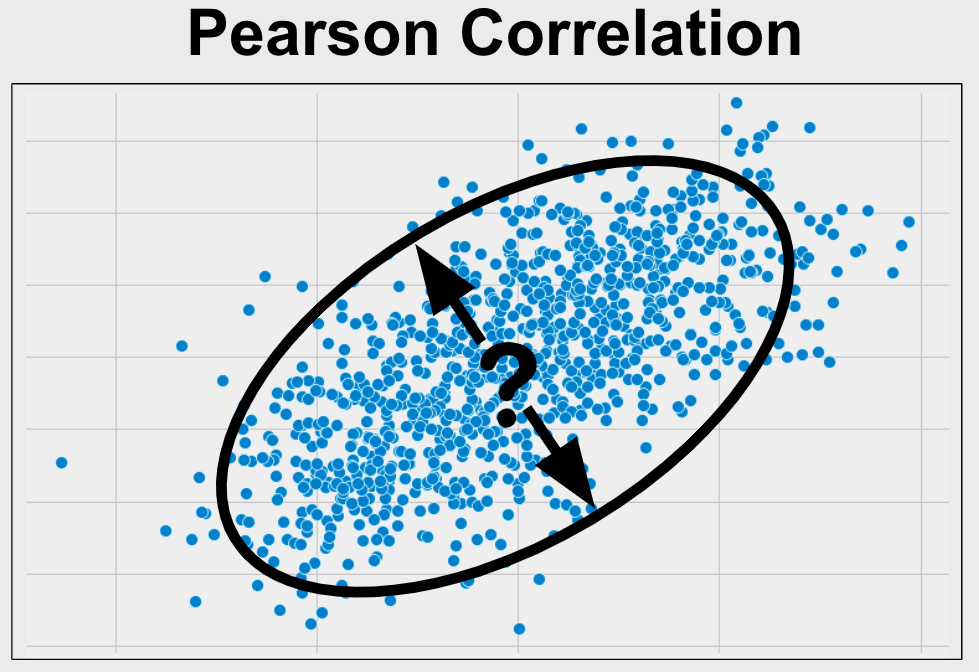

Pearson Correlation

Pearson Correlation measures the linear relationship between two continuous variables when neither is skewed or ordinal and neither has outliers.
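As a quick sketch of what the coefficient computes, the example below divides the sum of cross-products of deviations from the mean by the square root of the product of the two sums of squared deviations. The name `pearson_r` and the sample data are illustrative only.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient for two equal-length numeric lists."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length, at least 2")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    # Sum of cross-products and sums of squares of deviations from the means.
    s_xy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    s_xx = sum((a - mean_x) ** 2 for a in x)
    s_yy = sum((b - mean_y) ** 2 for b in y)
    if s_xx == 0 or s_yy == 0:
        raise ValueError("correlation is undefined for a constant variable")
    return s_xy / math.sqrt(s_xx * s_yy)


print(pearson_r([1, 2, 3, 4, 5], [2, 4, 6, 8, 10]))  # exact linear relationship
```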

Phi Coefficient

The Phi Coefficient can be used to determine the strength of the relationship between two binary variables.
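For a 2x2 table with cell counts a, b (first row) and c, d (second row), phi is commonly computed as (ad - bc) divided by the square root of the product of the four marginal totals. The sketch below follows that formula; the function name `phi_coefficient` and the counts are hypothetical.

```python
import math


def phi_coefficient(a, b, c, d):
    """Phi for the 2x2 table [[a, b], [c, d]] of counts."""
    margins = (a + b) * (c + d) * (a + c) * (b + d)
    if margins == 0:
        raise ValueError("phi is undefined when a row or column total is zero")
    return (a * d - b * c) / math.sqrt(margins)


print(phi_coefficient(10, 0, 0, 10))  # perfect positive association
print(phi_coefficient(5, 5, 5, 5))    # no association
```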

Point-Biserial Correlation

Point-Biserial Correlation is used to measure the relationship between two variables when one variable is binary and the other is continuous.
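One common way to compute it, sketched below, compares the mean of the continuous variable in the two groups, scaled by the overall (population) standard deviation and the group proportions. The result matches the Pearson correlation obtained when the binary variable is coded as 0 and 1. The name `point_biserial` and the sample data are made up for illustration.

```python
import math
from statistics import fmean, pstdev


def point_biserial(groups, values):
    """Point-biserial correlation; groups holds 0/1 codes, values the measurements."""
    if len(groups) != len(values):
        raise ValueError("groups and values must have the same length")
    ones = [v for g, v in zip(groups, values) if g == 1]
    zeros = [v for g, v in zip(groups, values) if g == 0]
    if not ones or not zeros:
        raise ValueError("both groups must contain at least one observation")
    n = len(values)
    spread = pstdev(values)  # population standard deviation of all values
    if spread == 0:
        raise ValueError("undefined when the continuous variable is constant")
    mean_gap = fmean(ones) - fmean(zeros)
    return mean_gap / spread * math.sqrt(len(ones) * len(zeros) / n ** 2)


# Hypothetical data: group 1 scores higher than group 0.
print(point_biserial([0, 0, 1, 1], [1, 2, 3, 4]))
```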