Intraclass Correlation: Measuring Agreement on Continuous Ratings

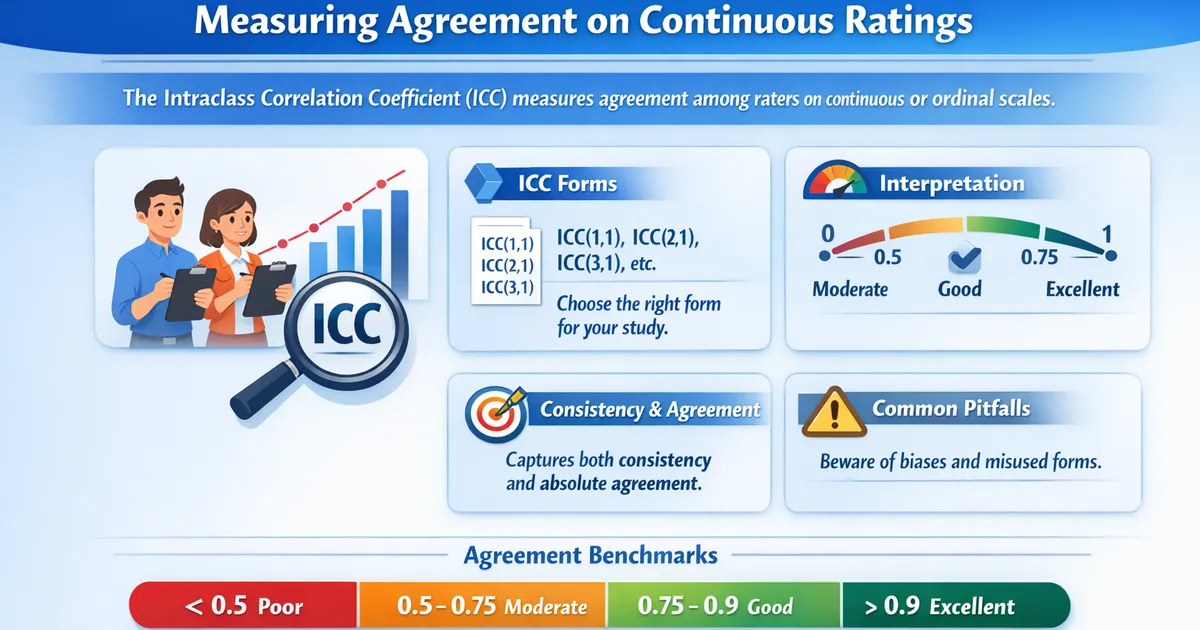

Intraclass Correlation Coefficient (ICC) measures agreement among raters on continuous or ordinal scales. Learn which ICC form to use, how to interpret values, and common pitfalls.

Quick Hits

- ICC measures agreement among raters on continuous or ordinal scales

- Unlike Pearson correlation, ICC can capture absolute agreement, not just consistency

- Multiple forms: ICC(1,1), ICC(2,1), ICC(3,1), etc.; choose based on your design

- Values typically range from 0 (no agreement) to 1 (perfect agreement); sample estimates can fall below 0

- Benchmarks: < 0.5 poor, 0.5-0.75 moderate, 0.75-0.9 good, > 0.9 excellent

When raters provide continuous scores (e.g., quality ratings from 1-10, response time in seconds, readability scores), you need the Intraclass Correlation Coefficient (ICC) to measure their agreement.

Why Not Just Use Pearson Correlation?

Pearson correlation measures only whether two sets of scores move together linearly. Two raters can have r = 1.0 while disagreeing on every single item:

| Item | Rater A | Rater B |
|---|---|---|
| 1 | 3 | 5 |
| 2 | 5 | 7 |
| 3 | 7 | 9 |

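Pearson r = 1.0, but Rater B is always 2 points higher. An absolute-agreement ICC such as ICC(2,1) comes out at about 0.67 for these data, reflecting this systematic disagreement. A consistency ICC such as ICC(3,1) would still be 1.0, because it ignores a constant offset between raters.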
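To make the contrast concrete, the sketch below computes Pearson r alongside ICC(2,1) and ICC(3,1) for the three-item table above, using the standard two-way ANOVA mean squares. The function names (`pearson_r`, `two_way_mean_squares`, `icc_2_1`, `icc_3_1`) are illustrative and not taken from any library; for real analyses a vetted statistics package is the safer choice.

```python
from statistics import mean

def pearson_r(x, y):
    """Plain Pearson correlation between two equal-length score lists."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5

def two_way_mean_squares(ratings):
    """ratings: one row per item, one column per rater, no missing cells."""
    n, k = len(ratings), len(ratings[0])
    grand = mean(v for row in ratings for v in row)
    row_means = [mean(row) for row in ratings]
    col_means = [mean(row[j] for row in ratings) for j in range(k)]
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)   # between items
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)   # between raters
    ss_total = sum((v - grand) ** 2 for row in ratings for v in row)
    ss_err = ss_total - ss_rows - ss_cols                     # residual
    msr = ss_rows / (n - 1)
    msc = ss_cols / (k - 1)
    mse = ss_err / ((n - 1) * (k - 1))
    return n, k, msr, msc, mse

def icc_2_1(ratings):
    """Two-way random effects, absolute agreement, single rater."""
    n, k, msr, msc, mse = two_way_mean_squares(ratings)
    return (msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n)

def icc_3_1(ratings):
    """Two-way mixed effects, consistency, single rater."""
    n, k, msr, msc, mse = two_way_mean_squares(ratings)
    return (msr - mse) / (msr + (k - 1) * mse)

ratings = [[3, 5], [5, 7], [7, 9]]          # rows = items, columns = Rater A, Rater B
rater_a = [row[0] for row in ratings]
rater_b = [row[1] for row in ratings]

print(round(pearson_r(rater_a, rater_b), 3))  # 1.0
print(round(icc_2_1(ratings), 3))             # 0.667
print(round(icc_3_1(ratings), 3))             # 1.0
```

The constant 2-point offset lands entirely in the between-rater mean square, which ICC(2,1) penalizes and ICC(3,1) ignores.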

Choosing the Right ICC Form

The choice depends on your study design:

| Design | ICC Form | Use When |
|---|---|---|
| One-way random | ICC(1,1) | Different raters for each subject |
| Two-way random, absolute | ICC(2,1) | Same raters for every subject, raters are a sample from a larger pool, you care about absolute agreement |
| Two-way mixed, consistency | ICC(3,1) | Same raters for every subject, raters are fixed, you care about consistency (a constant offset between raters is tolerated) |

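The second index gives the unit of measurement: the "(·,1)" versions give single-measure reliability (the reliability of one rater's score), while the "(·,k)" versions give average-measure reliability, which is appropriate when the final score will be the average of k raters.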
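For reference, the forms above can be written in terms of ANOVA mean squares, following the usual Shrout and Fleiss definitions. The symbols are introduced here and are not from the original text: $MS_R$ is the between-subjects mean square, $MS_C$ the between-raters mean square, $MS_E$ the residual mean square, $MS_W$ the within-subjects mean square from a one-way ANOVA, $n$ the number of subjects, and $k$ the number of raters.

$$
\begin{aligned}
\mathrm{ICC}(1,1) &= \frac{MS_R - MS_W}{MS_R + (k-1)\,MS_W} &
\mathrm{ICC}(1,k) &= \frac{MS_R - MS_W}{MS_R} \\
\mathrm{ICC}(2,1) &= \frac{MS_R - MS_E}{MS_R + (k-1)\,MS_E + \frac{k}{n}\,(MS_C - MS_E)} &
\mathrm{ICC}(2,k) &= \frac{MS_R - MS_E}{MS_R + \frac{1}{n}\,(MS_C - MS_E)} \\
\mathrm{ICC}(3,1) &= \frac{MS_R - MS_E}{MS_R + (k-1)\,MS_E} &
\mathrm{ICC}(3,k) &= \frac{MS_R - MS_E}{MS_R}
\end{aligned}
$$

The only difference between ICC(2,1) and ICC(3,1) is the $MS_C$ term in the denominator: systematic differences between raters lower the absolute-agreement form but leave the consistency form unchanged.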

Interpretation

| ICC Value | Agreement Level |
|---|---|
| < 0.50 | Poor |
| 0.50 - 0.75 | Moderate |
| 0.75 - 0.90 | Good |
| > 0.90 | Excellent |
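If you apply these bands programmatically, a small helper like the following may be enough. `interpret_icc` is a hypothetical name, and the handling of the shared boundaries is an assumption: the table leaves 0.75 ambiguous, so this sketch places 0.75 in "good", and it places 0.90 in "good" because "excellent" is stated as strictly above 0.90.

```python
def interpret_icc(icc):
    """Map an ICC value to the benchmark labels in the table above.

    Boundary handling is an assumption: 0.50 -> moderate, 0.75 -> good,
    0.90 -> good (excellent requires a value strictly above 0.90).
    """
    if icc < 0.50:
        return "poor"
    if icc < 0.75:
        return "moderate"
    if icc <= 0.90:
        return "good"
    return "excellent"

# Label a point estimate together with its confidence bounds,
# since the interval can straddle more than one band.
for value in (0.58, 0.71, 0.81):
    print(value, interpret_icc(value))
# 0.58 moderate
# 0.71 moderate
# 0.81 good
```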

Example

Five evaluators rate 50 AI-generated responses on a 1-7 quality scale. Using ICC(2,1) for absolute agreement:

ICC = 0.71, 95% CI [0.58, 0.81]

Interpretation: Moderate agreement. The evaluators have reasonable but imperfect consistency. The confidence interval is fairly wide, running from the moderate band into the good band, which suggests that more items or more raters could improve precision. Consider calibration sessions to reduce systematic differences between raters.
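A workflow of this shape can be sketched as follows. The ratings here are simulated, not the data behind the figures above, and the interval is a percentile bootstrap over items, which is a stand-in technique and not necessarily how the reported interval was obtained (F-distribution-based intervals are the more common choice). All names (`avg`, `icc_2_1`, `bootstrap_ci`) and simulation parameters are illustrative assumptions.

```python
import random

def avg(values):
    values = list(values)
    return sum(values) / len(values)

def icc_2_1(ratings):
    """ICC(2,1): two-way random effects, absolute agreement, single rater.

    ratings: one row per item, one column per rater, no missing cells.
    """
    n, k = len(ratings), len(ratings[0])
    grand = avg(v for row in ratings for v in row)
    ss_rows = k * sum((avg(row) - grand) ** 2 for row in ratings)
    ss_cols = n * sum(
        (avg(row[j] for row in ratings) - grand) ** 2 for j in range(k)
    )
    ss_total = sum((v - grand) ** 2 for row in ratings for v in row)
    msr = ss_rows / (n - 1)
    msc = ss_cols / (k - 1)
    mse = (ss_total - ss_rows - ss_cols) / ((n - 1) * (k - 1))
    return (msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n)

def bootstrap_ci(ratings, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap interval, resampling items (rows) with replacement."""
    rng = random.Random(seed)
    n = len(ratings)
    stats = sorted(
        icc_2_1([ratings[rng.randrange(n)] for _ in range(n)])
        for _ in range(n_boot)
    )
    lo = stats[int((alpha / 2) * n_boot)]
    hi = stats[int((1 - alpha / 2) * n_boot) - 1]
    return lo, hi

# Simulated stand-in data: 50 items, 5 raters, 1-7 scale.
# Each rater gets a fixed bias; each rating adds independent noise.
rng = random.Random(42)
rater_bias = [rng.gauss(0, 0.5) for _ in range(5)]
ratings = []
for _ in range(50):
    true_quality = rng.gauss(4, 1.2)
    ratings.append(
        [min(7, max(1, round(true_quality + b + rng.gauss(0, 0.7))))
         for b in rater_bias]
    )

icc = icc_2_1(ratings)
lo, hi = bootstrap_ci(ratings)
print(f"ICC(2,1) = {icc:.2f}, 95% bootstrap CI [{lo:.2f}, {hi:.2f}]")
```

Rerunning the simulation with more items is one way to see how the interval tightens as the sample grows.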

See also: Cohen's Kappa for categorical ratings and Krippendorff's Alpha for a flexible alternative that handles any measurement level.

References

- https://www.ncbi.nlm.nih.gov/pmc/articles/PMC4913118/

- https://www.sciencedirect.com/science/article/pii/S0895435615002285

Key Takeaway

The Intraclass Correlation Coefficient is the standard measure of agreement for continuous ratings. Depending on the form chosen, it captures either consistency alone or the absolute level of agreement as well, making it more appropriate than Pearson correlation for reliability studies. Choose the right ICC form based on whether raters are random or fixed and whether you care about consistency or absolute agreement.