Krippendorff's Alpha

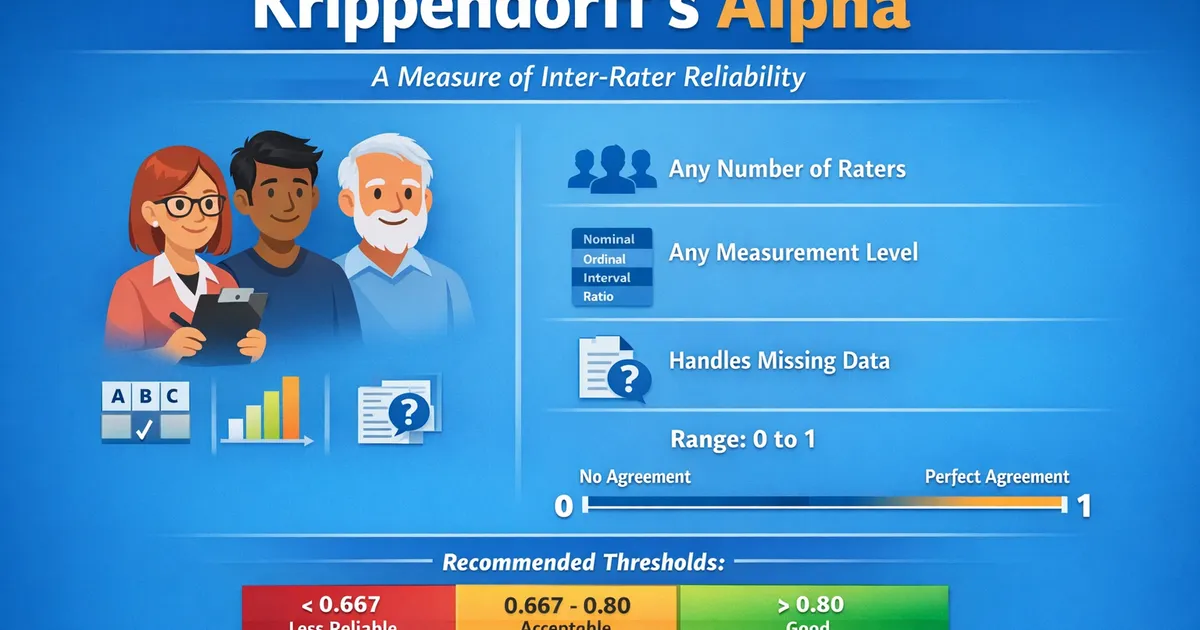

Krippendorff's Alpha measures inter-rater reliability for any number of raters, any number of categories, and any measurement level. Use it as a general-purpose agreement statistic.

Quick Hits

- Works with any number of raters (2 or more)

- Handles any measurement level: nominal, ordinal, interval, or ratio

- Properly handles missing data (not all raters need to rate every item)

- Typically ranges from 0 (agreement no better than chance) to 1 (perfect agreement); negative values are possible and indicate systematic disagreement

- Recommended thresholds: > 0.80 good, 0.667-0.80 acceptable for tentative conclusions

What is Krippendorff's Alpha?

Krippendorff's Alpha ($\alpha$) is a reliability coefficient that measures the agreement among raters who assign categorical, ordinal, interval, or ratio values to units of analysis. It brings several other agreement statistics (including Scott's pi and Fleiss' kappa) into a single framework and is closely related to Cohen's kappa.

The statistic compares the observed disagreement ($D_o$) to the disagreement expected by chance ($D_e$):

$$\alpha = 1 - \frac{D_o}{D_e}$$

Krippendorff's Alpha is also called Krippendorff's Reliability Coefficient or simply Alpha.
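
The ratio of observed to expected disagreement can be made concrete with a short sketch for the nominal case. This is a minimal illustration, not a reference implementation: the function name `nominal_alpha`, the input layout (one list of ratings per item, with `None` for a missing rating), and the example data are all assumptions made here. It uses the standard coincidence-matrix approach, in which every pair of ratings within an item is counted with weight 1 / (ratings for that item - 1).

```python
from collections import Counter
from itertools import permutations


def nominal_alpha(units):
    """Krippendorff's alpha for nominal data (illustrative sketch).

    `units` is a list of lists; each inner list holds the ratings one
    item received. A missing rating is written as None.
    """
    # Drop missing ratings, then keep only items with at least two
    # ratings: a single rating cannot be paired with anything.
    pairable = [[v for v in unit if v is not None] for unit in units]
    pairable = [unit for unit in pairable if len(unit) >= 2]

    # Coincidence matrix: each ordered pair of ratings within an item,
    # weighted by 1 / (m - 1), where m is the item's number of ratings.
    coincidences = Counter()
    for unit in pairable:
        weight = 1.0 / (len(unit) - 1)
        for a, b in permutations(unit, 2):
            coincidences[(a, b)] += weight

    # Marginal total per category, and n = number of pairable ratings.
    totals = Counter()
    for (a, _b), weight in coincidences.items():
        totals[a] += weight
    n = sum(totals.values())

    # Observed disagreement: share of coincidences between unequal values.
    d_o = sum(w for (a, b), w in coincidences.items() if a != b) / n
    # Expected disagreement: what the marginals alone would produce.
    d_e = sum(
        totals[a] * totals[b] for a in totals for b in totals if a != b
    ) / (n * (n - 1))
    if d_e == 0:
        raise ValueError("alpha is undefined when only one category is used")
    return 1.0 - d_o / d_e


# Made-up data: four items, two or three ratings each, one missing rating.
example_units = [["a", "a"], ["a", "b"], ["b", "b", None], ["b", "b", "b"]]
print(round(nominal_alpha(example_units), 3))  # 0.556
```

For ordinal, interval, or ratio data the same structure applies, but each pair of unequal values is weighted by a squared difference instead of counting as 1.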

Assumptions for Krippendorff's Alpha

The assumptions include:

- Two or More Raters

- Same Units of Analysis

- Independent Ratings

- Appropriate Measurement Level Specified

Two or More Raters

Unlike Cohen's kappa (which requires exactly two raters), Krippendorff's alpha works with any number of raters.

Same Units of Analysis

Raters should be classifying or scoring the same set of items. However, not every rater needs to rate every item, as Krippendorff's alpha handles missing data properly.
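
As a rough illustration of how missing ratings are treated, the sketch below turns a rater-by-item table into per-item rating lists and keeps only the items that can contribute to the statistic. The function name `to_pairable_units`, the dictionary layout, and the rater names are hypothetical; the one rule it encodes is that an item needs at least two ratings to be pairable.

```python
def to_pairable_units(ratings_by_rater):
    """Convert {rater: [rating per item]} into per-item rating lists.

    None marks an item the rater did not rate. Items with fewer than
    two ratings are dropped because they cannot form a pair.
    """
    units = []
    # zip(*...) walks the table item by item (column by column).
    for column in zip(*ratings_by_rater.values()):
        present = [value for value in column if value is not None]
        if len(present) >= 2:
            units.append(present)
    return units


# Made-up ratings from three hypothetical raters on four items.
ratings = {
    "rater_1": [1, 2, None, 3],
    "rater_2": [1, None, None, 3],
    "rater_3": [None, 2, 4, 2],
}
units = to_pairable_units(ratings)
print(units)                       # [[1, 1], [2, 2], [3, 3, 2]]
print(sum(len(u) for u in units))  # 7 pairable ratings
```

The third item is dropped because only one rater scored it; the other items are kept even though no item here was rated by every rater on every occasion.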

Independent Ratings

Each rater must make judgments independently without being influenced by other raters.

Appropriate Measurement Level

You must specify whether the data is nominal, ordinal, interval, or ratio. This affects how disagreement is calculated. The nominal level treats all disagreements equally. The ordinal, interval, and ratio levels weight each disagreement by its magnitude, so ratings that are far apart count more than ratings that are close together.
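
To show how the measurement level changes what counts as disagreement, here is a sketch of the squared-difference functions commonly used with Krippendorff's alpha. The page itself does not give these formulas, so treat them as the usual textbook definitions; the function names and the rating counts in the example are assumptions made for illustration.

```python
def delta_nominal(c, k):
    """Nominal: any two different values disagree equally."""
    return 0.0 if c == k else 1.0


def delta_interval(c, k):
    """Interval: squared distance between the two values."""
    return float(c - k) ** 2


def delta_ratio(c, k):
    """Ratio: squared distance relative to the size of the values."""
    if c + k == 0:
        return 0.0
    return ((c - k) / (c + k)) ** 2


def delta_ordinal(c, k, counts):
    """Ordinal: based on how many ratings fall between the two ranks.

    `counts` maps each rank to the number of times it was used.
    """
    low, high = sorted((c, k))
    between = sum(n for rank, n in counts.items() if low <= rank <= high)
    return (between - (counts[c] + counts[k]) / 2) ** 2


# Made-up usage counts for a 5-point scale.
counts = {1: 2, 2: 3, 3: 5, 4: 3, 5: 2}

# Compare a near miss (1 vs 2) with a far miss (1 vs 5).
print(delta_nominal(1, 2), delta_nominal(1, 5))                  # 1.0 1.0
print(delta_interval(1, 2), delta_interval(1, 5))                # 1.0 16.0
print(delta_ordinal(1, 2, counts), delta_ordinal(1, 5, counts))  # 6.25 169.0
```

Under the nominal level a rating of 1 versus 5 is no worse than 1 versus 2, while the other levels penalize the larger gap much more heavily. Choosing a level that is too coarse for the data discards that information.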

When to use Krippendorff's Alpha?

- You have two or more raters classifying or rating the same items

- You want a flexible, all-purpose agreement measure

- Your data may be nominal, ordinal, interval, or ratio

- Some raters may have missing data (not every rater rated every item)

If you have exactly two raters with complete data on a nominal scale, Cohen's Kappa is a simpler alternative.

Krippendorff's Alpha Example

Five human evaluators rate the quality of 100 AI-generated summaries on a 5-point ordinal scale (1 = poor, 5 = excellent). Not every evaluator rated every summary; on average each summary was rated by 3.2 evaluators.

Using Krippendorff's alpha with ordinal measurement level: $\alpha$ = 0.72.

Interpretation: $\alpha$ = 0.72 falls in the "acceptable for tentative conclusions" range (0.667-0.80). The evaluators agree reasonably well but there is meaningful disagreement, likely on borderline-quality summaries. Improvements could include clearer rubrics and calibration sessions before rating.
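
The interpretation step can be written as a small helper. The two upper bands come from the thresholds listed on this page; the function name `interpret_alpha` and the wording of the label for values below 0.667 are assumptions, since the page does not name that band.

```python
def interpret_alpha(alpha):
    """Map an alpha value to the threshold bands used in this article."""
    if alpha > 0.80:
        return "good"
    if alpha >= 0.667:
        return "acceptable for tentative conclusions"
    # Label assumed here; the article does not name this band.
    return "below the range for tentative conclusions"


print(interpret_alpha(0.72))  # acceptable for tentative conclusions
```

These cut-offs are conventions, not sharp boundaries, so a value just under a threshold deserves a closer look at where the raters disagree before it is dismissed.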

Key Takeaway

Krippendorff's alpha is among the most flexible inter-rater reliability measures. It handles any number of raters, any measurement level, and missing data. Use it as your default reliability statistic unless you have a specific reason to use Cohen's kappa (two raters, nominal scale, no missing data).