StatsTest Blog

Experimental design, data analysis, and statistical tooling for modern teams. No hype, just the math.

Relationship · Jan 29 · New

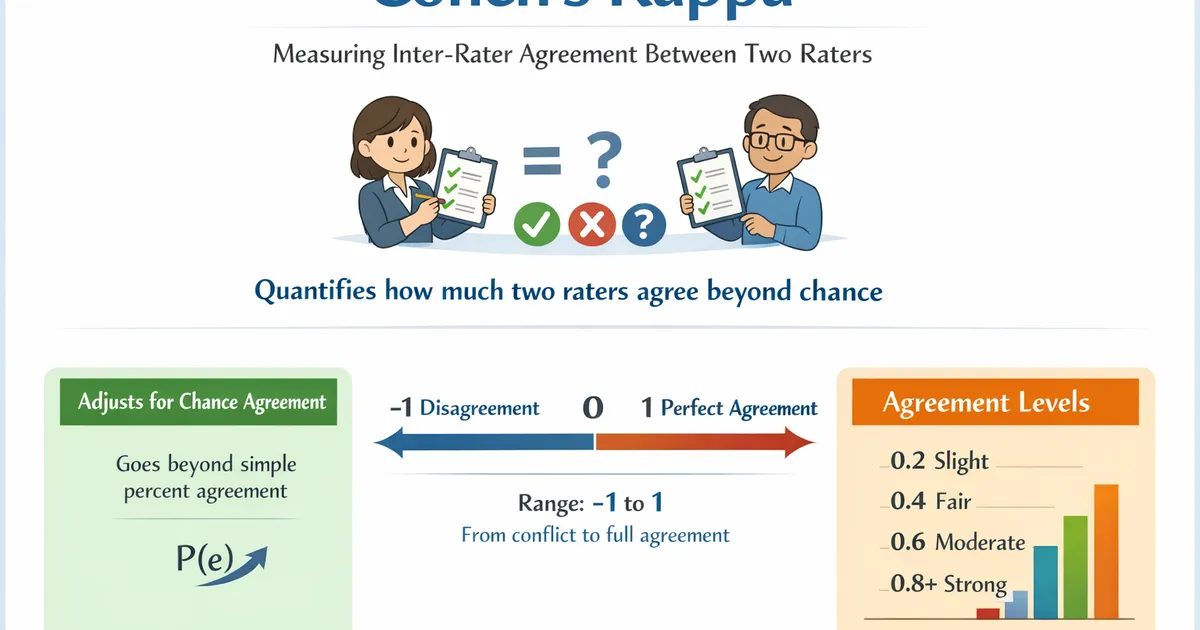

Cohen's Kappa

Cohen's Kappa measures inter-rater agreement between two raters who each classify the same items into mutually exclusive categories. Use it to quantify how much the two raters agree beyond what would be expected by chance alone.
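To make "agreement beyond chance" concrete, here is a minimal sketch of the standard definition, kappa = (p_o - p_e) / (1 - p_e), where p_o is the observed proportion of items on which the raters agree and p_e is the agreement expected by chance from each rater's own label frequencies. The function name `cohens_kappa` and the example labels are illustrative assumptions, not something taken from a particular library or dataset.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same items (illustrative helper)."""
    if len(rater_a) != len(rater_b):
        raise ValueError("Both raters must label the same number of items.")
    n = len(rater_a)
    if n == 0:
        raise ValueError("At least one item is required.")

    # Observed agreement: share of items where both raters chose the same label.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # Chance agreement: for each category, multiply the two raters' marginal
    # proportions, then sum over categories.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    p_e = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)

    if p_e == 1.0:
        # Both raters used one identical label for everything; kappa is undefined.
        raise ValueError("Kappa is undefined when chance agreement equals 1.")

    return (p_o - p_e) / (1 - p_e)


# Made-up example data: 10 items, two raters, labels "yes" / "no".
rater_a = ["yes"] * 6 + ["no"] * 4
rater_b = ["yes"] * 5 + ["no"] * 4 + ["yes"]

kappa = cohens_kappa(rater_a, rater_b)
print(round(kappa, 3))  # 0.583
```

In this made-up example the raters agree on 8 of 10 items (p_o = 0.8), but chance alone would produce p_e = 0.52, so kappa is about 0.58, noticeably lower than the raw 80% agreement. For real analyses, an established implementation such as scikit-learn's `cohen_kappa_score` is likely a better choice than a hand-rolled function.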