
When Confidence Intervals and P-Values Seem to Disagree

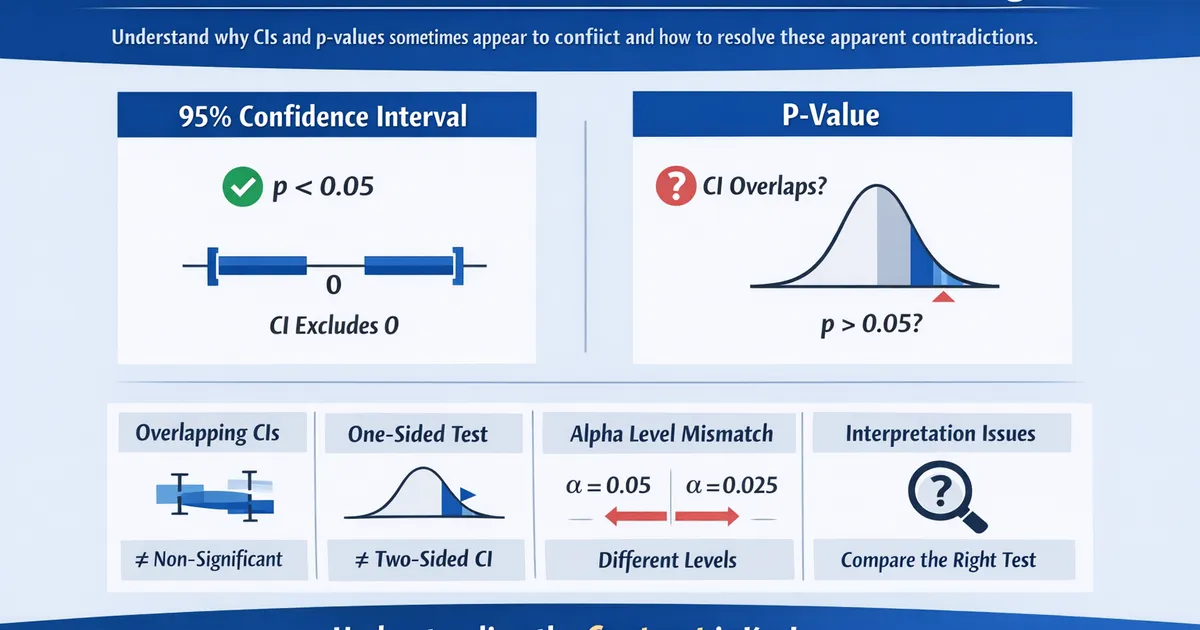

Understand why CIs and p-values sometimes appear to conflict and how to resolve these apparent contradictions. Learn common scenarios and the correct interpretation.

Quick Hits

- 95% CI excluding 0 ⟺ p < 0.05 for two-sided tests of H₀: difference = 0

- Apparent conflicts usually involve comparing the wrong things

- Overlapping group CIs ≠ non-significant difference

- One-sided p-values and two-sided CIs don't match directly

TL;DR

When p-values and confidence intervals seem to contradict each other, there's almost always a mismatch in what's being compared. The core rule: for two-sided tests with α = 0.05, a 95% CI that excludes 0 always means p < 0.05, and vice versa, provided both are computed from the same estimate, standard error, and reference distribution. Common "conflicts" involve overlapping group CIs (which don't imply non-significance), one-sided vs. two-sided comparisons, or different alpha levels.

The Fundamental Relationship

They're Mathematically Linked

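import numpy as np
from scipy import stats

np.random.seed(42)
control = np.random.normal(100, 15, 50)
treatment = np.random.normal(108, 15, 50)

diff = np.mean(treatment) - np.mean(control)
# With equal group sizes, this unpooled SE equals the pooled SE that ttest_ind uses
se = np.sqrt(np.var(control, ddof=1)/50 + np.var(treatment, ddof=1)/50)

# Two-sided pooled t-test (treatment first, so t has the same sign as diff)
t_stat, p_value = stats.ttest_ind(treatment, control)

# 95% CI for the difference, using the same t distribution (df = 50 + 50 - 2 = 98)
t_crit = stats.t.ppf(0.975, 98)
ci = (diff - t_crit * se, diff + t_crit * se)

print(f"diff = {diff:.2f}, 95% CI = ({ci[0]:.2f}, {ci[1]:.2f}), p = {p_value:.4f}")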

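For a two-sided test, "does the 95% CI exclude 0?" and "is p < 0.05?" always get the same answer when both use the same standard error and distribution, as above. If one says "significant," the other must too. If they don't, the two were computed with different methods or something is wrong with the calculation.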
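One way to convince yourself that the agreement is exact is a brute-force check. The sketch below simulates many two-group datasets (the group sizes, means, and seed are arbitrary assumptions), computes the pooled t-test p-value with `scipy.stats.ttest_ind` and a 95% CI from the same pooled standard error and t distribution, and counts how often the two verdicts differ.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(0)
n1, n2 = 40, 25          # deliberately unequal group sizes (arbitrary)
df = n1 + n2 - 2
t_crit = stats.t.ppf(0.975, df)

disagreements = 0
for _ in range(1000):
    a = rng.normal(0.0, 1.0, n1)
    b = rng.normal(0.4, 1.0, n2)
    diff = b.mean() - a.mean()
    # Pooled variance and SE: the same quantities ttest_ind uses by default
    sp2 = ((n1 - 1) * a.var(ddof=1) + (n2 - 1) * b.var(ddof=1)) / df
    se = np.sqrt(sp2 * (1 / n1 + 1 / n2))
    _, p = stats.ttest_ind(b, a)
    excludes_zero = (diff - t_crit * se > 0) or (diff + t_crit * se < 0)
    disagreements += int((p < 0.05) != excludes_zero)

print(f"Disagreements in 1000 datasets: {disagreements}")
```

Because the CI and the test share one standard error and one t distribution, the count should be zero; swapping in a different SE or critical value for only one of them is what produces "conflicts."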

Why They Must Agree

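The 95% CI contains exactly those null values θ₀ for which a two-sided test of H₀: difference = θ₀ would give p ≥ 0.05.

Therefore:

- If 0 is outside the CI → p < 0.05 for H₀: difference = 0

- If 0 is inside the CI → p ≥ 0.05 for H₀: difference = 0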

The CI is an inverted hypothesis test: it's the set of null values you would not reject.
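The inversion can be carried out literally: test many candidate null values and keep the ones that are not rejected. This sketch assumes a hypothetical estimate of 5.2 with SE 2.1 and a two-sided z-test; the grid range and spacing (0.001) are arbitrary choices.

```python
import numpy as np
from scipy import stats

diff, se = 5.2, 2.1  # hypothetical estimate and standard error

# Two-sided z-test p-value for H0: difference = null_value, for every candidate null
null_values = np.linspace(-5, 15, 20001)          # grid spacing 0.001
p_values = 2 * stats.norm.sf(np.abs(diff - null_values) / se)
not_rejected = null_values[p_values >= 0.05]

z_crit = stats.norm.ppf(0.975)
print(f"Inverted test: [{not_rejected.min():.3f}, {not_rejected.max():.3f}]")
print(f"Formula CI:    [{diff - z_crit * se:.3f}, {diff + z_crit * se:.3f}]")
```

The set of non-rejected nulls matches the usual estimate ± 1.96 × SE interval up to the grid spacing.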

The same logic applies at other levels:

- 99% CI ↔ α = 0.01

- 90% CI ↔ α = 0.10

Common "Conflicts" (That Aren't Really Conflicts)

Scenario 1: Overlapping Group CIs

The most common source of confusion.

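import numpy as np
from scipy import stats

np.random.seed(42)
n = 50
control = np.random.normal(100, 15, n)
treatment = np.random.normal(107, 15, n)

# Individual group CIs (normal approximation)
def group_ci(data):
    m = np.mean(data)
    se = np.std(data, ddof=1) / np.sqrt(len(data))
    return (m - 1.96 * se, m + 1.96 * se)

control_ci = group_ci(control)
treatment_ci = group_ci(treatment)

# CI for the difference, using the same t distribution as ttest_ind (df = 2n - 2)
diff = np.mean(treatment) - np.mean(control)
se_diff = np.sqrt(np.var(control, ddof=1)/n + np.var(treatment, ddof=1)/n)
t_crit = stats.t.ppf(0.975, 2*n - 2)
diff_ci = (diff - t_crit * se_diff, diff + t_crit * se_diff)

_, p = stats.ttest_ind(treatment, control)

print(f"Control CI:    ({control_ci[0]:.1f}, {control_ci[1]:.1f})")
print(f"Treatment CI:  ({treatment_ci[0]:.1f}, {treatment_ci[1]:.1f})")
print(f"Difference CI: ({diff_ci[0]:.1f}, {diff_ci[1]:.1f}), p = {p:.4f}")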

The individual group CIs may overlap, yet the CI for the difference can still exclude zero (and the p-value can be significant).

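The key insight: the standard error of a difference is smaller than you'd guess from looking at the individual CIs:

$$SE_{\text{diff}} = \sqrt{SE_{\text{control}}^2 + SE_{\text{treatment}}^2} \;<\; SE_{\text{control}} + SE_{\text{treatment}}$$

For the two 95% CIs not to overlap, the means must differ by more than 1.96 × (SE_control + SE_treatment), but a significant difference only requires more than 1.96 × SE_diff. With equal group SEs, that is 2 SE versus about 1.41 SE (each times 1.96). So a difference can be significant even with overlapping group CIs. Always look at the CI for the difference directly.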
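A small numeric check makes the overlap point concrete. The group means and standard errors below are hypothetical summary values chosen so that the two group CIs overlap; the difference is then tested with a two-sided z-test.

```python
from scipy import stats

# Hypothetical group summaries (means and standard errors)
mean_c, se_c = 100.0, 2.0
mean_t, se_t = 106.0, 2.0
z_crit = stats.norm.ppf(0.975)

# Individual 95% CIs
ci_c = (mean_c - z_crit * se_c, mean_c + z_crit * se_c)
ci_t = (mean_t - z_crit * se_t, mean_t + z_crit * se_t)
overlap = ci_t[0] < ci_c[1]

# 95% CI and two-sided p-value for the difference
diff = mean_t - mean_c
se_diff = (se_c ** 2 + se_t ** 2) ** 0.5
ci_diff = (diff - z_crit * se_diff, diff + z_crit * se_diff)
p = 2 * stats.norm.sf(abs(diff) / se_diff)

print(f"Control CI:    ({ci_c[0]:.2f}, {ci_c[1]:.2f})")
print(f"Treatment CI:  ({ci_t[0]:.2f}, {ci_t[1]:.2f})  overlap: {overlap}")
print(f"Difference CI: ({ci_diff[0]:.2f}, {ci_diff[1]:.2f})  p = {p:.4f}")
```

Here the group CIs overlap (about 102.1 to 103.9), yet the CI for the difference, roughly (0.46, 11.54), excludes 0 and p is about 0.034.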

Scenario 2: One-Sided vs. Two-Sided

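import numpy as np
from scipy import stats

np.random.seed(42)
n = 30
control = np.random.normal(100, 15, n)
treatment = np.random.normal(106, 15, n)

diff = np.mean(treatment) - np.mean(control)
se = np.sqrt(np.var(control, ddof=1)/n + np.var(treatment, ddof=1)/n)
df = 2*n - 2

# Two-sided test and the matching two-sided 95% CI (same t distribution)
t_stat = diff / se
p_two = 2 * stats.t.sf(abs(t_stat), df)
t_crit = stats.t.ppf(0.975, df)
ci_95 = (diff - t_crit * se, diff + t_crit * se)

# One-sided test (H1: treatment > control)
p_one = stats.t.sf(t_stat, df)

print(f"Two-sided p = {p_two:.4f}, 95% CI = ({ci_95[0]:.2f}, {ci_95[1]:.2f})")
print(f"One-sided p = {p_one:.4f}")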

The 95% two-sided CI might include 0, yet the one-sided p-value can be less than 0.05. This looks like a conflict but isn't.

Resolution: A two-sided 95% CI corresponds to a two-sided test at α = 0.05. A one-sided test at α = 0.05 corresponds to a one-sided 95% confidence bound, which equals the lower (or upper) limit of a two-sided 90% CI. Match the CI type to the test type.
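Here is the matching in numbers, as a sketch with hypothetical summary values (difference 5.0, SE 2.8, 58 degrees of freedom). The one-sided test is compared with a one-sided 95% lower bound, computed from the 0.95 quantile of the t distribution, which is the same number as the lower limit of a two-sided 90% CI.

```python
from scipy import stats

diff, se, df = 5.0, 2.8, 58  # hypothetical estimate, standard error, degrees of freedom
t_stat = diff / se

p_two = 2 * stats.t.sf(abs(t_stat), df)  # two-sided test
p_one = stats.t.sf(t_stat, df)           # one-sided test, H1: difference > 0

t975 = stats.t.ppf(0.975, df)
ci_95 = (diff - t975 * se, diff + t975 * se)   # two-sided 95% CI
lower_95 = diff - stats.t.ppf(0.95, df) * se   # one-sided 95% lower bound

print(f"Two-sided: p = {p_two:.4f}, 95% CI = ({ci_95[0]:.3f}, {ci_95[1]:.3f})")
print(f"One-sided: p = {p_one:.4f}, 95% lower bound = {lower_95:.3f}")
```

The two-sided pair agrees (p above 0.05, CI includes 0), and the one-sided pair agrees (p below 0.05, lower bound above 0). Only the cross-comparison looks like a conflict.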

Scenario 3: Different Alpha Levels

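import numpy as np
from scipy import stats

np.random.seed(42)
n = 50
data = np.random.normal(105, 30, n)
mean = np.mean(data)
se = np.std(data, ddof=1) / np.sqrt(n)

# Test against H₀: μ = 100 (two-sided, t distribution with n - 1 df)
t_stat = (mean - 100) / se
p = 2 * stats.t.sf(abs(t_stat), n - 1)

# CIs at three confidence levels, using the same t distribution as the test
cis = {}
for level in (0.90, 0.95, 0.99):
    t_crit = stats.t.ppf(1 - (1 - level) / 2, n - 1)
    cis[level] = (mean - t_crit * se, mean + t_crit * se)

print(f"p = {p:.4f}")
for level, (lo, hi) in cis.items():
    print(f"{level:.0%} CI: ({lo:.2f}, {hi:.2f}), excludes 100: {lo > 100 or hi < 100}")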

Each confidence level corresponds to one alpha level:

- p < 0.10 ↔ 90% CI excludes the null value

- p < 0.05 ↔ 95% CI excludes the null value

- p < 0.01 ↔ 99% CI excludes the null value

If you compare a p-value at one alpha level with a CI at a different confidence level, they may appear to disagree. Always match the CI confidence level to the alpha level you're testing.
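A quick illustration of a level mismatch, assuming a hypothetical estimate of 5.0 with SE 2.2 and a z-based test: the p-value falls between 0.01 and 0.05, so the verdict depends on which confidence level you hold it against.

```python
from scipy import stats

diff, se = 5.0, 2.2  # hypothetical estimate and standard error
p = 2 * stats.norm.sf(abs(diff) / se)

def z_ci(level):
    """Two-sided z-based CI at the given confidence level."""
    z_crit = stats.norm.ppf(1 - (1 - level) / 2)
    return (diff - z_crit * se, diff + z_crit * se)

print(f"p = {p:.4f}")
for level in (0.90, 0.95, 0.99):
    lo, hi = z_ci(level)
    print(f"{level:.0%} CI: ({lo:.3f}, {hi:.3f}), excludes 0: {lo > 0 or hi < 0}")
```

With p ≈ 0.023, the 90% and 95% CIs exclude 0 while the 99% CI includes it, exactly as the α ↔ confidence-level pairing predicts.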

Resolution Guide

Group CIs overlap but difference is significant. You're comparing apples to oranges. Look at the CI for the difference, not individual group CIs.

One-sided p < 0.05 but 95% CI includes null. You're mixing one-sided and two-sided. Use a one-sided CI or a 90% two-sided CI for a one-sided test.

P-value says significant but CI seems to include null. Possibly different alpha levels. Match the CI confidence level to alpha (95% CI ↔ α = 0.05).

Different software gives different results. The tools may use different approximations or methods (for example, z vs. t critical values, or pooled vs. Welch standard errors). Check which method each uses, and take the p-value and CI from the same method.

CI excludes null but effect seems trivial. This is not a conflict but the gap between statistical and practical significance. The CI and p-value agree on significance; what you're questioning is practical importance.
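The "different software" case is easy to reproduce: one data set, two standard methods, two p-values. This sketch uses simulated groups with unequal sizes and variances (all parameters are arbitrary assumptions) and compares `scipy.stats.ttest_ind` with its default pooled variance against the Welch version (`equal_var=False`).

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(1)
a = rng.normal(100, 5, 20)    # small, low-variance group
b = rng.normal(104, 20, 60)   # larger, high-variance group

_, p_pooled = stats.ttest_ind(b, a)                  # Student's t, pooled variance
_, p_welch = stats.ttest_ind(b, a, equal_var=False)  # Welch's t

print(f"Pooled p = {p_pooled:.4f}, Welch p = {p_welch:.4f}")
```

If one tool reports the pooled p-value and another reports a Welch-based CI, the two can land on opposite sides of 0.05 without either being wrong.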

Checking for True Agreement

from scipy import stats

def check_agreement(diff, se, null=0, alpha=0.05, sided='two'):
    """
    Check that a z-based CI and p-value agree.

    sided='two' tests effect != null; any other value tests effect > null.
    """
    z = (diff - null) / se
    if sided == 'two':
        p = 2 * stats.norm.sf(abs(z))
        z_crit = stats.norm.ppf(1 - alpha/2)
        ci = (diff - z_crit * se, diff + z_crit * se)
        ci_excludes_null = ci[0] > null or ci[1] < null
    else:
        # Upper one-sided test pairs with a one-sided lower confidence bound
        p = stats.norm.sf(z)
        z_crit = stats.norm.ppf(1 - alpha)
        ci = (diff - z_crit * se, float('inf'))
        ci_excludes_null = ci[0] > null

    p_significant = p < alpha
    upper = '∞' if ci[1] == float('inf') else f"{ci[1]:.3f}"

    print("AGREEMENT CHECK")
    print("-" * 40)
    print(f"Null hypothesis value: {null}")
    print(f"Alpha level: {alpha}")
    print(f"Test type: {sided}-sided")
    print()
    print(f"Effect: {diff:.3f}")
    print(f"Standard Error: {se:.3f}")
    print()
    print(f"P-value: {p:.4f}")
    print(f"  Significant (p < {alpha}): {p_significant}")
    print()
    print(f"CI: [{ci[0]:.3f}, {upper}]")
    print(f"  Excludes {null}: {ci_excludes_null}")
    print()
    if p_significant == ci_excludes_null:
        print("✓ AGREEMENT: CI and p-value tell the same story")
    else:
        print("⚠ DISAGREEMENT: Check your calculations!")

    return {'p': p, 'ci': ci, 'agree': p_significant == ci_excludes_null}

# Example
check_agreement(diff=5.2, se=2.1, null=0, alpha=0.05, sided='two')

Common Pitfalls

Eyeballing overlapping CIs. Assuming overlap means "not significant." CIs can overlap and the difference can still be significant. Always calculate the CI for the difference directly.

Using different software. Getting the p-value from one tool and the CI from another. Methods may differ (exact vs. approximate, etc.). Use the same tool and method for both.

Rounding errors. The CI appears to touch 0 while the p-value is just under 0.05. Rounding can make them seem to disagree. Use more decimal places for comparison.

Wrong CI for the question. Testing a difference but looking at individual CIs. You need the CI for what you're testing. Match the CI to the parameter in your hypothesis.
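The rounding pitfall is easiest to see with values sitting right at the boundary. The estimate and SE below are hypothetical, picked so that a two-sided z-test lands just under 0.05.

```python
from scipy import stats

diff, se = 3.921, 2.0  # hypothetical values chosen to sit at the boundary
z_crit = stats.norm.ppf(0.975)
p = 2 * stats.norm.sf(abs(diff) / se)
ci_low = diff - z_crit * se

print(f"Rounded:   p = {p:.2f}, CI lower = {ci_low:.2f}")
print(f"Unrounded: p = {p:.6f}, CI lower = {ci_low:.6f}")
```

At two decimals the output reads "p = 0.05, CI lower = 0.00", which looks like a borderline clash; at full precision p is about 0.0499 and the lower limit is about 0.001, so both say "significant."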

R Implementation

# Checking CI/p-value agreement in R
check_agreement <- function(diff, se, null = 0, alpha = 0.05) {
  cat("AGREEMENT CHECK\n")
  cat(strrep("=", 40), "\n\n", sep = "")

  # P-value (two-sided z-test)
  z <- (diff - null) / se
  p <- 2 * pnorm(abs(z), lower.tail = FALSE)

  # (1 - alpha) CI, matching the test's alpha
  z_crit <- qnorm(1 - alpha / 2)
  ci_low <- diff - z_crit * se
  ci_high <- diff + z_crit * se

  # Check
  p_sig <- p < alpha
  ci_excludes <- (ci_low > null) | (ci_high < null)

  cat(sprintf("Effect: %.3f (SE: %.3f)\n", diff, se))
  cat(sprintf("P-value: %.4f (sig at alpha = %.2f: %s)\n", p, alpha, p_sig))
  cat(sprintf("%.0f%% CI: [%.3f, %.3f] (excludes %.1f: %s)\n",
              100 * (1 - alpha), ci_low, ci_high, null, ci_excludes))
  cat("\n")

  if (p_sig == ci_excludes) {
    cat("✓ AGREEMENT\n")
  } else {
    cat("⚠ DISAGREEMENT - check calculations!\n")
  }

  invisible(list(p = p, ci = c(ci_low, ci_high), agree = p_sig == ci_excludes))
}

# Usage:
# check_agreement(diff = 5.2, se = 2.1)


Key Takeaway

When CIs and p-values seem to disagree, it's almost always because you're comparing mismatched quantities: overlapping group CIs (when you need the CI for the difference), one-sided tests with two-sided CIs, or different alpha levels. For two-sided tests at α = 0.05, a 95% CI that excludes the null ALWAYS corresponds to p < 0.05, as long as both come from the same method. When in doubt, compute both using the same method and verify they match; if they don't, something's wrong with your calculation.


Frequently Asked Questions

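Can a 95% CI exclude 0 but p > 0.05?

Not when both come from the same two-sided test with the same standard error and reference distribution. If you see this, the two numbers were computed differently, for example a z-based CI with a t-test p-value, pooled vs. Welch standard errors, or a one-sided p-value.

Why do overlapping confidence intervals sometimes show significant difference?

Because the standard error of a difference, √(SE₁² + SE₂²), is smaller than the sum of the two group standard errors. Two group CIs can overlap while the CI for the difference still excludes 0.

What if I have a one-sided test but a two-sided CI?

Compare the one-sided test at α = 0.05 with a one-sided 95% confidence bound, which equals the matching limit of a two-sided 90% CI.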
