Agreement Between Raters

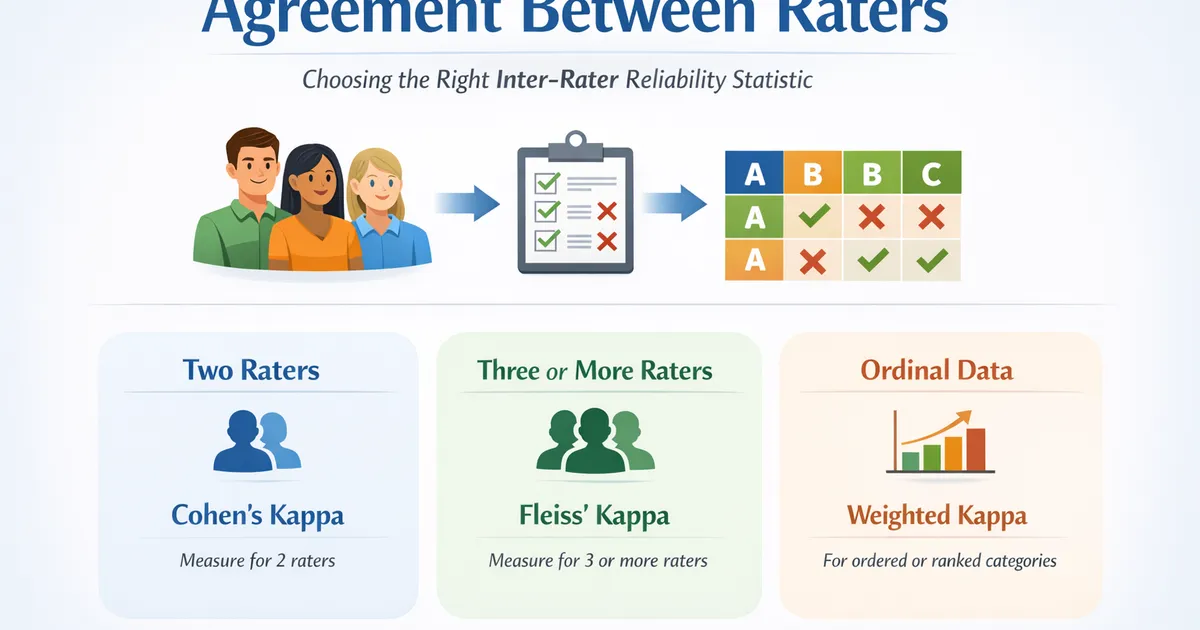

How many raters do you have? Choose the right inter-rater reliability statistic for measuring agreement between raters or coders who classify items into categories.

More Information (if you need help deciding)

Exactly two raters: Use Cohen's kappa when exactly two raters classify the same set of items into categories. Cohen's kappa measures how far the observed agreement exceeds the agreement expected by chance, and it is the most widely used agreement statistic for the two-rater case. Use weighted kappa when your categories are ordinal (have a natural order), so that near-misses count as partial agreement rather than full disagreement.
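To make the chance correction concrete, here is a minimal sketch of unweighted Cohen's kappa in plain Python. The function name `cohens_kappa` and the example ratings are illustrative assumptions, not part of any particular library, and the sketch does not cover the weighted variant.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two equal-length lists of category labels."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must rate the same non-empty set of items")
    n = len(rater_a)

    # Observed agreement: share of items where both raters chose the same category.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    # Chance agreement: product of each rater's marginal proportions, summed over categories.
    count_a = Counter(rater_a)
    count_b = Counter(rater_b)
    p_e = sum(count_a[c] * count_b[c] for c in count_a) / (n * n)

    if p_e == 1:
        # Both raters used a single identical category; kappa is undefined.
        return float("nan")
    return (p_o - p_e) / (1 - p_e)


# Hypothetical data: 50 items, 35 agreements (20 "yes"/"yes", 15 "no"/"no").
rater_a = ["yes"] * 20 + ["yes"] * 5 + ["no"] * 10 + ["no"] * 15
rater_b = ["yes"] * 20 + ["no"] * 5 + ["yes"] * 10 + ["no"] * 15
kappa = cohens_kappa(rater_a, rater_b)  # approximately 0.4
```

In this made-up example the raters agree on 70% of items, but 50% agreement would be expected by chance from their marginal totals, so kappa comes out near 0.4 rather than 0.7.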

Two or more raters: Use Krippendorff's alpha when you have any number of raters (two or more), when some raters did not rate every item (missing data), or when your measurement scale is ordinal, interval, or ratio. Krippendorff's alpha also handles nominal categories, which makes it a flexible, general-purpose alternative to Cohen's kappa, Fleiss' kappa, and other specialized statistics.
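The handling of missing ratings can be sketched as follows. This is a minimal plain-Python illustration of Krippendorff's alpha for nominal data only, built from a coincidence table; the function name `krippendorff_alpha_nominal`, the use of `None` for a missing rating, and the example data are assumptions made for illustration. Ordinal, interval, and ratio scales need a different distance function that is not shown here.

```python
from collections import Counter
from itertools import permutations


def krippendorff_alpha_nominal(units):
    """Krippendorff's alpha for nominal data.

    `units` is a list of items; each item is a list with one rating per rater,
    using None where a rater did not rate that item.
    """
    # Coincidence table: every ordered pair of ratings within an item,
    # weighted by 1 / (m - 1), where m is the number of ratings for that item.
    coincidences = Counter()
    for ratings in units:
        values = [r for r in ratings if r is not None]
        m = len(values)
        if m < 2:
            continue  # items with fewer than two ratings carry no agreement information
        for c, k in permutations(values, 2):
            coincidences[(c, k)] += 1 / (m - 1)

    # Marginal totals per category and the overall number of pairable ratings.
    totals = Counter()
    for (c, _k), weight in coincidences.items():
        totals[c] += weight
    n = sum(totals.values())
    if n <= 1:
        raise ValueError("need at least one item rated by two or more raters")

    # Observed and expected disagreement (both scaled by n, which cancels in the ratio).
    observed = sum(w for (c, k), w in coincidences.items() if c != k)
    expected = sum(totals[c] * totals[k] for c in totals for k in totals if c != k) / (n - 1)
    if expected == 0:
        return float("nan")  # only one category was ever used; alpha is undefined
    return 1 - observed / expected


# Hypothetical data: five items, three raters, None marks a missing rating.
ratings = [
    ["a", "a", "a"],
    ["a", "b", None],
    ["b", "b", "b"],
    ["b", None, None],  # skipped: only one rating
    ["a", "a", None],
]
alpha = krippendorff_alpha_nominal(ratings)  # approximately 0.625
```

Items rated by only one rater drop out of the calculation, and items with fewer raters contribute proportionally fewer pairs, which is how the statistic tolerates incomplete data without discarding whole raters.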